Corporate AI Ethics and Governance: Navigating the Multifaceted Nature of Shareholders and Stakeholders

As artificial intelligence (AI) transforms industries at an unprecedented pace, its ethics and governance are becoming a pressing issue for businesses. The potential benefits of AI are vast, but its adoption also raises significant challenges related to algorithmic bias, data ethics, and governance. This article explores the importance of corporate AI ethics and governance and discusses how companies can address these challenges effectively.

Defining Corporate AI Ethics and Governance

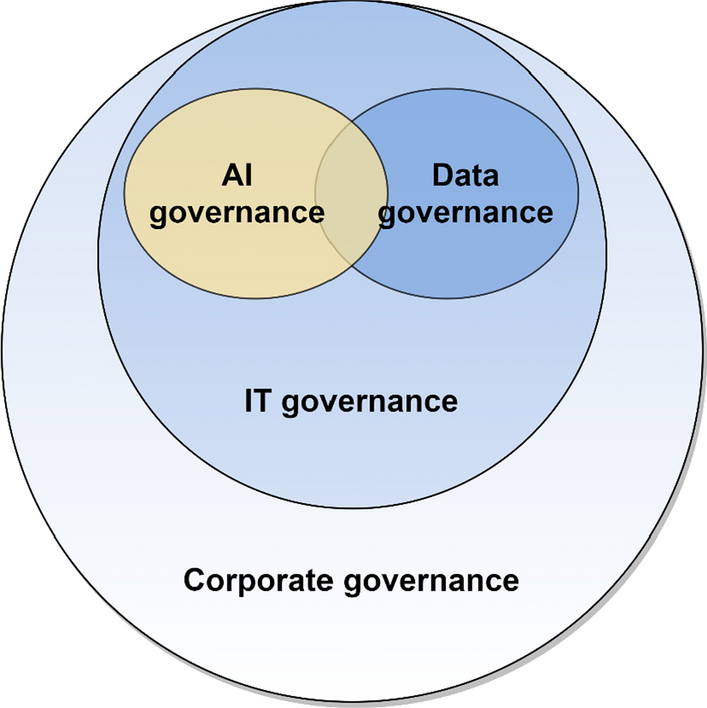

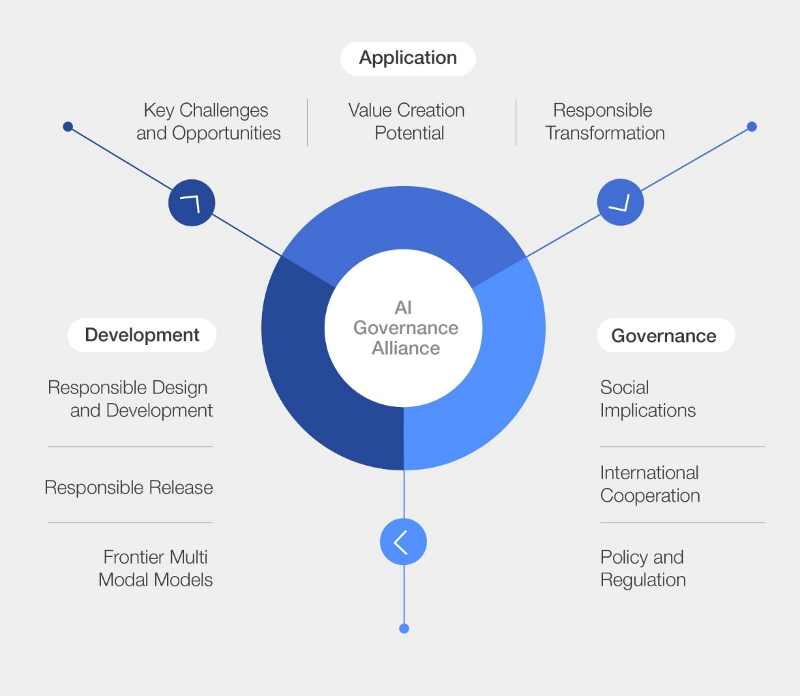

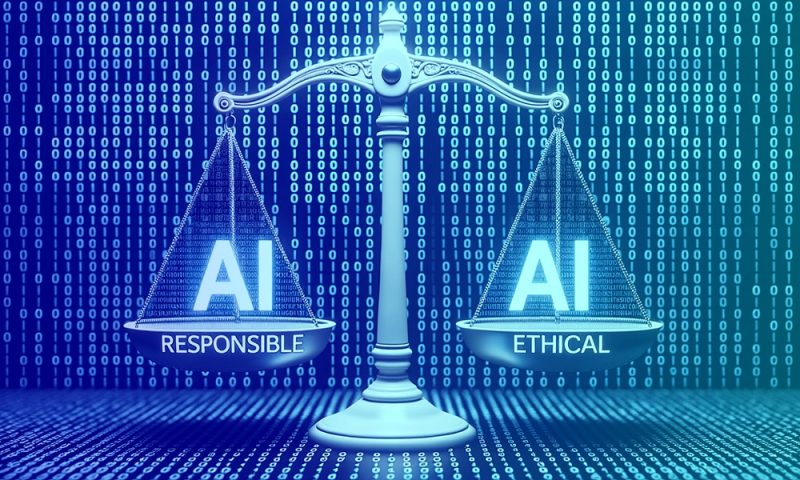

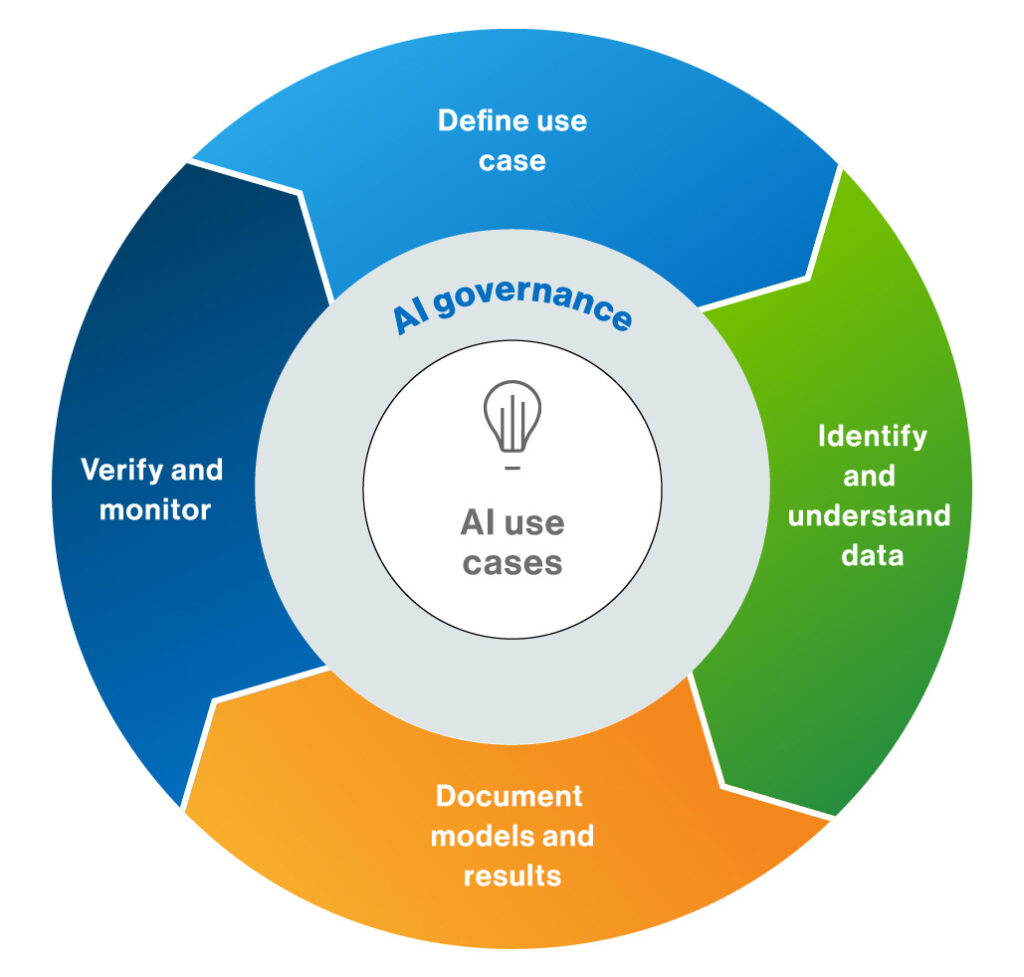

Corporate AI ethics refers to the moral principles that guide a company's development, deployment, and use of AI systems. In the business context, AI ethics intersects with corporate governance, stakeholder theory, corporate social responsibility (CSR), and data protection laws. Effective corporate AI governance means creating a framework that ensures accountability, transparency, and fairness in how AI systems are used.

Challenges in Corporate AI Governance

The widespread adoption of AI-powered business analytics applications has introduced significant challenges related to algorithmic bias, data ethics, and governance. As organizations increasingly rely on machine learning and big data analytics for customer profiling, credit scoring, hiring decisions, and other predictive tasks, concerns about bias, transparency, and accountability are growing. At the same time, the lack of clear AI governance frameworks exposes companies to ethical, legal, and reputational risks.
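
To make the idea of algorithmic bias in a use case such as hiring decisions more concrete, the sketch below compares how often each group receives a positive decision. It is only an illustration under stated assumptions: the record layout, the group labels, and the function names `selection_rates` and `selection_rate_ratio` are hypothetical and do not come from any particular governance framework, and a low ratio is a prompt for review rather than proof of bias.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive decisions for each group.

    `records` is an iterable of (group, selected) pairs. This layout is
    an assumption made for illustration only.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(bool(selected))
    return {group: positives[group] / totals[group] for group in totals}


def selection_rate_ratio(records):
    """Return the lowest group selection rate divided by the highest.

    A value near 1.0 means groups are selected at similar rates. Returns
    None when no group has any positive decision, since the ratio is
    undefined in that case.
    """
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return None
    return min(rates.values()) / highest


# Hypothetical audit sample: 10 decisions for each of two groups.
sample = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)
print(selection_rates(sample))
print(selection_rate_ratio(sample))
```

A check like this could be one input to a governance review, alongside transparency and accountability measures. Which threshold counts as acceptable is a policy decision that the code deliberately leaves open.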